Lesson Plan for Week 9

Objectives

With Xarray, we have now introduced the last tool for processing and analyzing data. We will keep practicing with Xarray and introduce more aggregation and processing methods, similar to what we have done in pandas.

After that, we will shift our focus toward modelling and actual data analysis. As before, we will also use other datasets, including satellite data.

Specific learning goals

Technical

- Opening and reading datasets using xarray

- Selecting and subsetting variables from an xarray dataset.

- Plotting xarray data on maps.

New:

- Use the .coarsen() aggregation function to downsample data.
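The selection and downsampling goals above can be sketched on a small synthetic dataset. This is a minimal illustration, not the course demonstration: the variable name `precip`, the coordinate names `lat` and `lon`, and all values are made up, and with a real file you would start from `xr.open_dataset(...)` instead of building the dataset by hand.

```python
import numpy as np
import xarray as xr

# Synthetic 4 x 6 "precipitation" grid; names and values are illustrative only.
lat = np.linspace(38.0, 38.75, 4)
lon = np.linspace(-79.5, -78.25, 6)
values = np.arange(24, dtype=float).reshape(4, 6)
ds = xr.Dataset(
    {"precip": (("lat", "lon"), values)},
    coords={"lat": lat, "lon": lon},
)

# Select one variable, then subset it by coordinate value.
precip = ds["precip"]
subset = precip.sel(lat=slice(38.0, 38.3))

# Downsample: average non-overlapping blocks of 2 x 2 grid cells.
coarse = precip.coarsen(lat=2, lon=2).mean()

print(precip.shape, "->", coarse.shape)
```

With the default settings, `.coarsen()` expects each dimension length to be an exact multiple of the window size, which is why the toy grid is 4 x 6.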

Weather and Climate System

- explain how topography impacts local weather

- understand differences between local observations and spatially averaged gridded climate products

- recognize the importance of high resolution climate data for local and regional climate adaptation

- compare and contrast precipitation patterns near Harrisonburg at different resolutions

- be able to relate this to questions surrounding gridded data in general and for your semester project
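One way to see why resolution matters for local extremes is a toy grid with a single wet cell standing in for a ridge top. The numbers below are made up (they are not PRISM data), and `block_mean` is a hypothetical helper written here to mimic the block averaging that coarsening performs.

```python
def block_mean(grid, factor):
    """Average non-overlapping factor x factor blocks of a 2D list."""
    rows, cols = len(grid), len(grid[0])
    if rows % factor or cols % factor:
        raise ValueError("grid dimensions must be divisible by factor")
    coarse = []
    for r in range(0, rows, factor):
        coarse_row = []
        for c in range(0, cols, factor):
            block = [grid[i][j]
                     for i in range(r, r + factor)
                     for j in range(c, c + factor)]
            coarse_row.append(sum(block) / len(block))
        coarse.append(coarse_row)
    return coarse

# Made-up monthly precipitation (mm) on a 4 x 4 grid; one cell is a wet ridge top.
fine = [
    [80.0, 80.0, 80.0, 80.0],
    [80.0, 160.0, 80.0, 80.0],
    [80.0, 80.0, 80.0, 80.0],
    [80.0, 80.0, 80.0, 80.0],
]

coarse_2 = block_mean(fine, 2)  # 2 x 2 grid
coarse_4 = block_mean(fine, 4)  # a single grid cell

max_fine = max(max(row) for row in fine)
max_2 = max(max(row) for row in coarse_2)
max_4 = coarse_4[0][0]
print(max_fine, max_2, max_4)  # prints 160.0 100.0 85.0
```

The area average stays at 85 mm at every resolution, but the local maximum drops from 160 to 100 to 85 mm as the grid gets coarser. A gauge sitting on the ridge would report something closer to the fine-grid value than to the coarse grid-cell mean.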

Class Preparation

Readings and Materials

Background

- Abernathy: Maps in Scientific Python

- We won’t discuss this explicitly, but it gives some background on why maps matter and how they are made in practice in Python.

- EarthHow: How Does Topography Affect Climate?

- This is a brief and accessible overview of how topography and geography affect weather and climate, including rainfall and temperature.

- Jong et al. (2023): Increases in extreme precipitation over the Northeast United States using high-resolution climate model simulations, npj Climate and Atmospheric Science, 6, 18, https://doi.org/10.1038/s41612-023-00347-w

- You don’t have to read this article, but it shows how modeled extreme precipitation depends on model resolution.

Data:

- UCAR Climate Data Guide for PRISM

- PRISM (Parameter-elevation Regressions on Independent Slopes Model) is a set of monthly, yearly, and daily extrapolated weather data covering mean temperature and precipitation, max/min temperatures, and dew points, primarily for the United States. The PRISM products use a weighted regression scheme to account for complex climate regimes associated with orography, rain shadows, temperature inversions, slope aspect, coastal proximity, and other factors.

Planned Agenda

Monday:

Finalize demonstration for

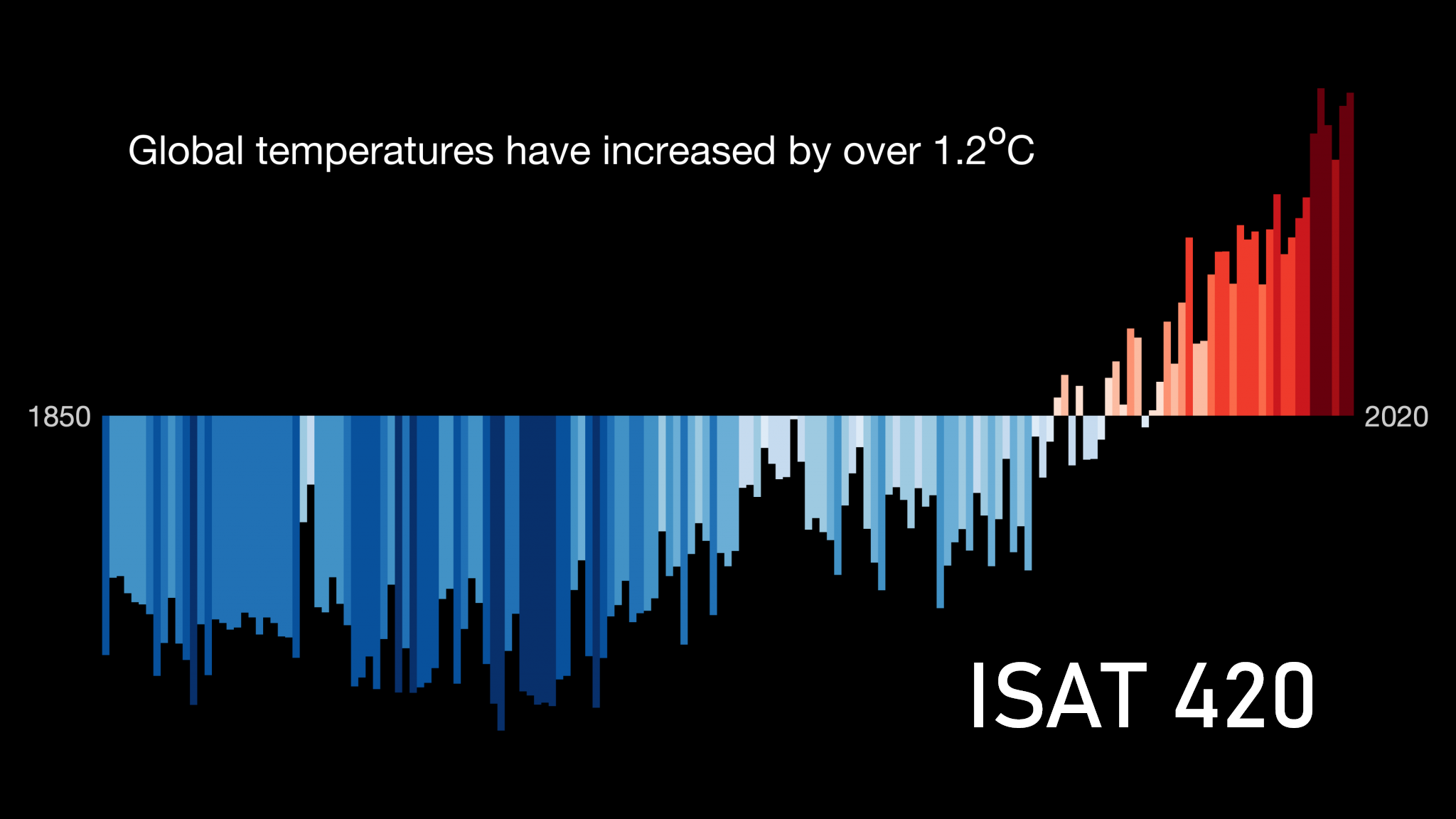

xarrayMini-lecture: Importance of resolution

(if time): Working with APIs

Wednesday:

- Check-in:

- Learning Reflection 2

- Fixing Environments

- Skills Check

- Demonstration/Exercise on model resolution.

- (postponed to week 10): More xarray: Grouping, Anomaly Calculation, Correlation, …

Activities: Fixing Environments

We need to make sure that we have working python environments that include all the necessary software.

These are all the packages that you should have installed for week 10. New additions for week 10 are pooch, s3fs, and boto3. The packages seaborn, h5netcdf, and openpyxl may be relevant in coming weeks.

name: ISAT_420

channels:

- conda-forge

- defaults

dependencies:

- dask

- jupyter

- matplotlib

- netcdf4

- numpy

- openpyxl

- pandas

- scipy

- seaborn

- xarray

- h5netcdf

- cdsapi

- cartopy

- pooch

- s3fs

- boto3

- geopandas

You can find all these packages in the updated environment.yml in the shared repository: ISAT_420_S26_Shared\environment.yml.
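To see which of the packages listed above are already available, a short check like the following can be run from inside the activated environment. This is a sketch, not part of the course repository: `missing_packages` is a hypothetical helper, and the list uses import names, which are assumed to match the conda package names except for netcdf4 (imported as `netCDF4`).

```python
from importlib.util import find_spec

# Import names for the packages listed above. The jupyter entry is left out
# because it is a metapackage rather than a single importable library.
REQUIRED = [
    "dask", "matplotlib", "netCDF4", "numpy", "openpyxl", "pandas",
    "scipy", "seaborn", "xarray", "h5netcdf", "cdsapi", "cartopy",
    "pooch", "s3fs", "boto3", "geopandas",
]

def missing_packages(names):
    """Return the names that cannot be found in the active environment."""
    return [name for name in names if find_spec(name) is None]

missing = missing_packages(REQUIRED)
if missing:
    print("Missing:", ", ".join(missing))
else:
    print("All listed packages were found.")
```

The check only looks up whether each package can be located; it does not import anything, so it runs quickly and will not catch a package that is installed but broken.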

To update our environment we have three options:

- Manually install the missing libraries

- Activate the environment, then install the missing packages:

conda activate ISAT_420_S26

conda install <package names>

- Preferred: use conda to update our existing course environment

- Update the existing environment: Anaconda: Managing Environments

- Nuclear Option: Start over and create a new course environment

- Delete the current ISAT_420_S26 conda environment (replace myenv with the environment name):

conda remove --name myenv --all

- Create a new one: Anaconda: Managing Environments

Skills Check: Tools

On the horizon (after Spring Break)

Some things that will come up soon.

- Learning Reflection 2

- Keep up with learning notes

- Semester project

- Now that you can open files such as .nc with Xarray, what other datasets might be useful?

- Data pipelines and reproducibility

- At some point after the break, we will circle back and talk about APIs.